Welcome to Rocket.Chat Labs: our way of showing what is cooking in the R&D kitchen. No polished demos on curated datasets. No slide decks dressed up as evidence. Just real engineering, real data, and the honest story of what we found.

The first in a series where we open up our R&D process: what we built, how we tested it, and what we actually found. We are starting with something that goes to the heart of why Rocket.Chat exists: the problem of finding what matters, when it matters, inside a sea of messages.

The problem: Critical information, buried in plain sight

If you run any messaging platform at scale, you know this problem intimately.

Information goes missing. Not because it was never shared. Because it was shared in the wrong channel, or the right channel three months ago, or in a thread that branched off a conversation you were never part of. The knowledge is there. It is just buried.

Someone answered your exact question six weeks ago. Someone documented the fix. But when you need it, you cannot find it. You do not remember which channel it was in.

Traditional keyword search only works when you already know what you are looking for. In some organizations this means duplicated work. In others, it means something far more consequential, such as the following:

01 The commander who walked in without the full picture

Critical updates from three field teams exist in separate channels: a status report filed two days ago, a flag in a side thread, an update nobody escalated. None of it surfaces in time. Decisions get made on a partial picture.

02 The analyst who almost missed the pattern

The signals are spread across weeks of messages and threads from multiple teams. Manually piecing it together takes days. By the time the pattern is visible, the window to act has narrowed. The information existed. It just was not findable at the speed required.

03 The decision maker flying blind

Four active workstreams. Each team communicates. No single place to surface what matters. Operating on status updates hours old, filtered through layers of relay. The ground truth is sitting in the channels. They just cannot see it.

How Intelligent Search works: Intent search, not a keyword search

Before we get into what happens at scale, it helps to understand what Intelligent Search actually does, because it is fundamentally different from keyword search.

Traditional search matches words.

Type "VPN outage" and it looks for those exact words. If someone wrote "the tunnel went down" instead, you get nothing.

This works for filing cabinets. It does not work for the way people actually communicate in critical and high-stakes situations.

Here’s how Intelligent Search works:

01 Vector embeddings

Messages are converted into numerical representations where meaning is encoded as position in a high-dimensional space. Similar concepts end up near each other, regardless of exact wording.

02 Intent matching

"Authentication service is down" and "users can't log in" land near each other in this space. A plain-language question surfaces conversations that mean the same thing, not just conversations that use the same words.

03 Retrieval only

The system retrieves content that has been indexed. It surfaces what exists in the corpus. It does not generate, invent, or synthesize. When a result feels off, it is because the closest match in the corpus genuinely was not close. The system returned the best available answer. It did not invent one.

"The semantic search model does not hallucinate results. Everything it surfaces comes from your actual message history. The challenge at scale is not accuracy. It is ranking. As the corpus grows, more content competes for relevance, and surfacing the best match first becomes harder. That is the engineering problem we set out to measure."

Devanshu Sharma, Lead Research Engineer, Rocket.Chat R&D

Why scale makes it harder

Search quality is not just a technology problem. It is a scale problem.

A system that works well at 50,000 documents can quietly struggle at 500,000.

Most vendors benchmark on small, clean datasets and extrapolate. We wanted to know what actually happens when you push past a million documents. So we built a benchmark designed to feel like actual operational conditions.

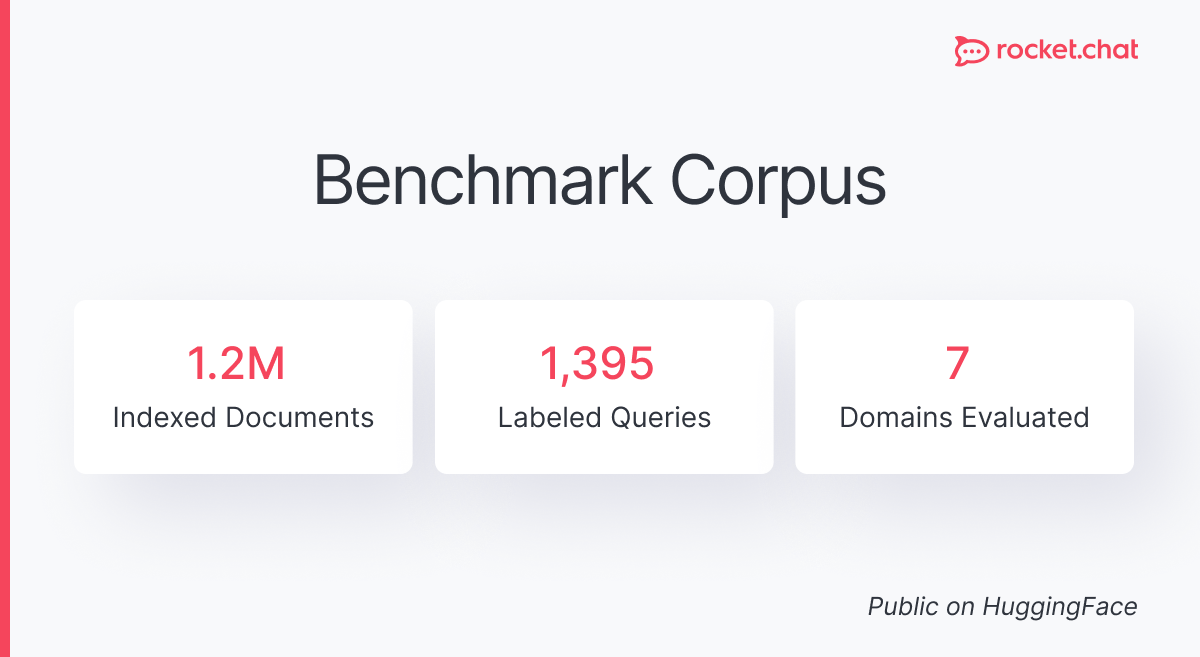

Our R&D team built a benchmark designed to stress Intelligent Search the way actual operations would. The corpus combines a large public conversational dataset with actual Rocket.Chat message exports, totaling 1,198,202 indexed documents. This is heterogeneous, conversational, real-world data across multiple domains. Not a clean dataset built to produce favorable numbers.

We evaluated retrieval quality using 1,395 labeled queries across seven domains, with ground truth defined per query. The benchmark dataset is publicly available on HuggingFace so anyone can download it and reproduce our results. The transparency is deliberate.

As for the embedding model, we used all-MiniLM-L6-v2, a compact, fast encoder as an honest baseline. The goal was to measure what the system actually does under real conditions. A stronger embedding model would likely push MAP toward 0.5 to 0.7. We didn't swap models to improve the number before publishing. This is a baseline. Not a demo.

“We wanted the benchmark to feel like a real operational environment, not a controlled experiment. That means noisy data, varied domains, and messages that look like things people actually send. Not queries designed to make the system look good.”

Devanshu Sharma, Lead Research Engineer, Rocket.Chat R&D

What we found: The honest numbers

We are going to be more candid than most vendors would be. Here is what the system did.

Under sustained load, with concurrent ingestion and query traffic at million-document scale:

- API response times stayed in the low single-digit milliseconds at the 95th percentile

- Error rates remained near baseline with no sustained bursts

- Worker failures were near zero

- The system absorbed traffic spikes through backpressure and batching without degradation

For defense organizations, government agencies, and critical infrastructure operators, this matters more than any retrieval benchmark.

A communication platform is mission-essential infrastructure. An AI feature that introduces latency spikes or instability into that stack is a liability, not a feature.

Intelligent Search does not compromise the operational envelope that teams depend on.

At around 380,000 documents, the system delivered strong results: a Mean Reciprocal Rank of 0.72, meaning the first relevant result was typically near the top of the list.

At the full 1.2 million document scale, that metric shifted to 0.56, still finding the right content, but with the top-ranked result sitting a bit further down the list on average.

This is expected behavior.

As the corpus tripled in size, the search space grew and more content competed for the top result. The system consistently retrieved the correct content. The challenge at 1.2 million documents was consistently ranking the best match first. This is expected behavior, and it gives us a precise target to improve against.

"A MRR of 0.56 over 1.2 million real-world conversational documents is a credible baseline, especially given the messiness and scale of the corpus. More importantly, it gives us a reproducible reference point for improvement. From here, changes such as stronger embedding models, hybrid retrieval, or reranking pipelines can be evaluated against the same baseline rather than judged by intuition or a smaller, cleaner test set."

- Devanshu Sharma, Lead Research Engineer, Rocket.Chat R&D

3 things worth paying attention to

First: the transparency itself is the point.

The organizations we serve operate under a higher standard of accountability. They do not adopt technology based on marketing demos. They need evidence. We are publishing real benchmarks against real data because trust in AI tooling should be earned with data, not slide decks. For teams that go through rigorous procurement and accreditation processes, this is the kind of evidence that matters.

Second: the operational stability story is underrated.

Most conversations about AI search focus on retrieval quality. But for teams running communication infrastructure that supports mission-critical operations, the question is not just "does it find the right thing?" It is "does adding this feature introduce risk into the systems my people depend on?" Low single-digit millisecond latency and near-zero error rates at million-document scale is our answer.

Third: this is a baseline, not a finish line.

We are sharing these results precisely because they represent the starting point for Intelligent Search. The benchmark gives us, and our community, a rigorous foundation for measuring every improvement we make from here. Better embedding models, hybrid retrieval strategies, reranking pipelines, all of these optimizations can now be evaluated against a real, public baseline. That is how engineering-led improvement works: you measure, you share, you iterate.

Why it matters

If you are evaluating AI-powered communication platforms: ask your vendors to show their benchmarks. Not a demo on a hundred documents. A test against a million. Not a controlled query on clean data, but a realistic mix of incident reports, operational threads, and cross-team coordination.

And ask whether adding AI search changes the operational profile of the system your people depend on every day.

We have done that work. The dataset is public. Anyone can check our work. And there is a lot more to come.

The full technical paper, including detailed metric tables and Grafana telemetry snapshots, is available from our R&D team. The benchmark dataset is published at huggingface.co/datasets/dnouv/intelligent-search-benchmark.

This is Rocket.Chat Labs. There is more coming.

Intelligent Search is just the beginning. We have also been building native federation based on the Matrix protocol, and we plan to share that story next: the technical decisions, the trade-offs, and why we believe we have built the most complete sovereign communications platform in the market.

That thread connects everything we are doing. Whether it is intelligent search, federated messaging, or the next capability we ship, the goal stays the same: give organizations full control over their communications, without compromises.

Rocket.Chat Labs is a series exploring how we build, test, and evolve the Rocket.Chat Secure CommsOS. From AI capabilities to native federation and beyond, we are opening up the engineering behind the platform. Follow along for there is a lot more in the kitchen.

Frequently asked questions about <anything>

- Digital sovereignty

- Federation capabilities

- Scalable and white-labeled

- Highly scalable and secure

- Full patient conversation history

- HIPAA-ready

for mission-critical operations

- On-premise and air-gapped ready

- Full control over sensitive data

- Secure cross-agency collaboration

%201.svg)

- Open source code

- Highly secure and scalable

- Unmatched flexibility

- End-to-end encryption

- Cloud or on-prem deployment

- Supports compliance with HIPAA, GDPR, FINRA, and more

- Supports compliance with HIPAA, GDPR, FINRA, and more

- Highly secure and flexible

- On-prem or cloud deployment